Top HMI Trends to Watch in 2026

At UXDivers, we’ve always believed that the most powerful technology should feel the most natural.

As we step into 2026, the "Human-Machine Interface" (HMI) is no longer just a screen on a dashboard or a keypad on a factory floor. It has become a sophisticated, living layer of communication between us and the world of embedded devices.

Based on the latest industry shifts and technological breakthroughs, here are the core HMI trends shaping 2026.

For years, we’ve transitioned from clicking physical buttons to issuing basic voice commands. In 2026, we are entering the era of Agentic HMI, where interfaces move from following instructions to understanding intent and context. Unlike a standard voice assistant that simply "sets the temperature" on command, an agentic interface uses environmental data to anticipate your needs.

This shift is driven by Multiagent Systems (MAS), allowing your devices to act as proactive coordinators rather than passive tools.

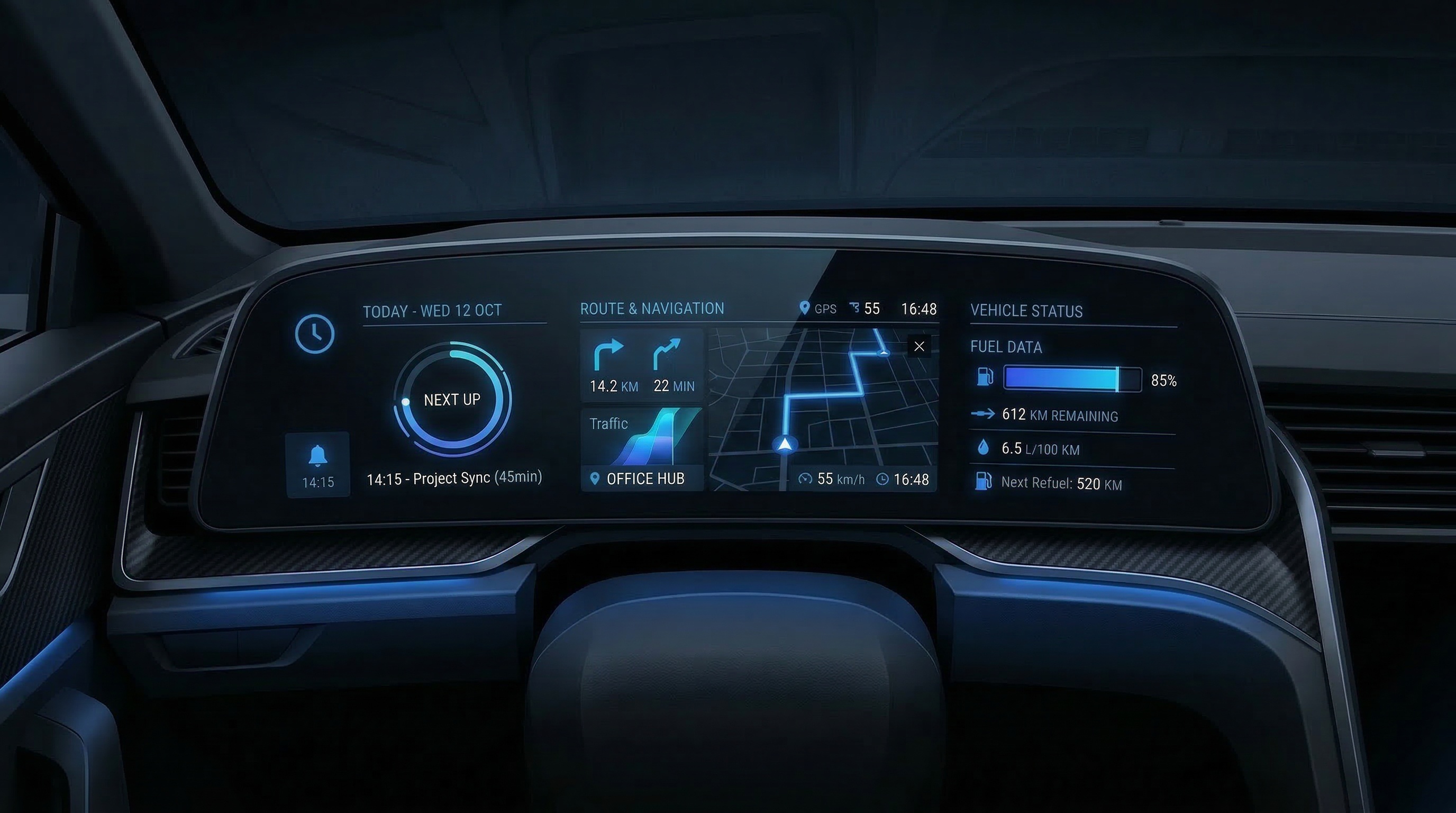

For example, a 2026 digital cockpit, as part of an advanced HMI system, does more than display a low-fuel warning; it cross-references your 9:00 AM calendar invite and real-time traffic to find a station on your route. It then asks if you’d like to stop now to ensure you aren’t late, transforming the interface into a predictive partner that manages logistics before you even realize there's a problem.
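To make the cockpit scenario concrete, here is a minimal Python sketch of the decision an agentic interface might make. Everything in it is an assumption for illustration: the `Station` and `TripContext` types, the `suggest_fuel_stop` function, and the 20% range margin are hypothetical and not taken from any real vehicle platform.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from typing import Optional


@dataclass
class Station:
    name: str
    detour_minutes: int   # extra driving time to reach the station from the route
    refuel_minutes: int   # estimated time spent at the pump


@dataclass
class TripContext:
    now: datetime
    meeting_start: datetime   # e.g. taken from the driver's calendar
    drive_minutes: int        # remaining drive time with current traffic
    range_km: float           # estimated range left in the tank
    distance_km: float        # remaining distance to the destination


def suggest_fuel_stop(ctx: TripContext, stations: list[Station]) -> Optional[str]:
    """Return a prompt for the driver, or None if no stop needs suggesting."""
    # Hypothetical safety margin: stay silent while range comfortably covers the trip.
    if ctx.range_km >= ctx.distance_km * 1.2:
        return None
    if not stations:
        return "Fuel is low and no station was found on your route."

    # Pick the station that costs the least total time.
    best = min(stations, key=lambda s: s.detour_minutes + s.refuel_minutes)
    stop_cost = best.detour_minutes + best.refuel_minutes
    arrival = ctx.now + timedelta(minutes=ctx.drive_minutes + stop_cost)

    if arrival <= ctx.meeting_start:
        return f"Fuel is low. Stop at {best.name} now? You would still arrive on time."
    late_by = int((arrival - ctx.meeting_start).total_seconds() // 60)
    return f"Fuel is low. Stopping at {best.name} would make you about {late_by} min late."
```

The point of the sketch is the shape of the logic rather than the numbers: the interface combines fuel, calendar, and traffic signals into one question for the driver instead of raising three separate alerts.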

One of the most interesting "course corrections" in 2026 is the balance between touchscreens and physical controls.

After years of "screen-only" designs, major manufacturers, led by feedback from brands like Volkswagen, are reintegrating physical buttons for critical functions.

The trend is now Multi-modal Harmony: combining haptic feedback, gesture control, and physical toggles in one unified Human-Machine Interface (HMI).

The goal for 2026 is to reduce cognitive load, so that users can complete a task without taking their eyes off the road or the patient.

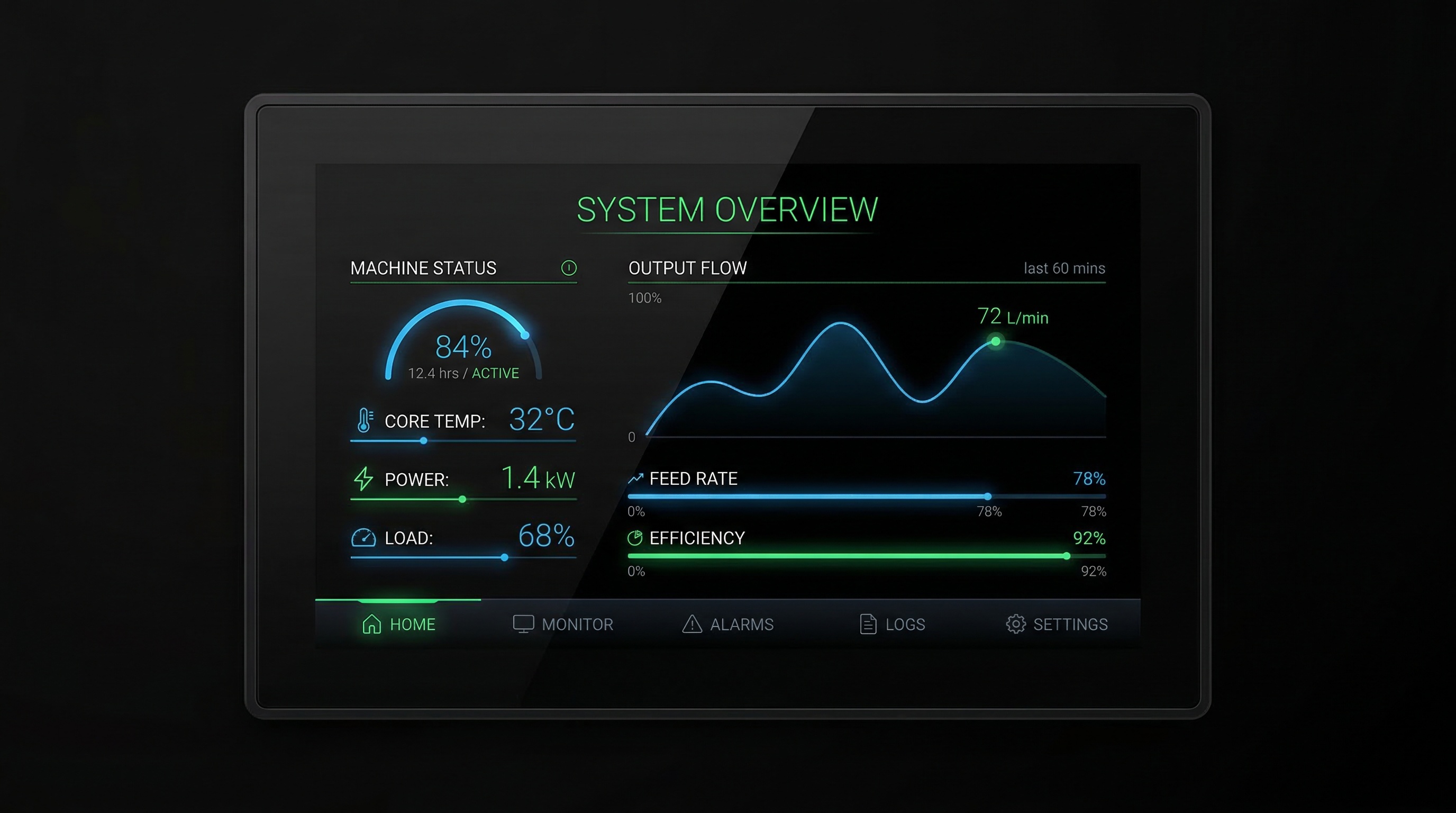

HMIs are becoming more visual than ever.

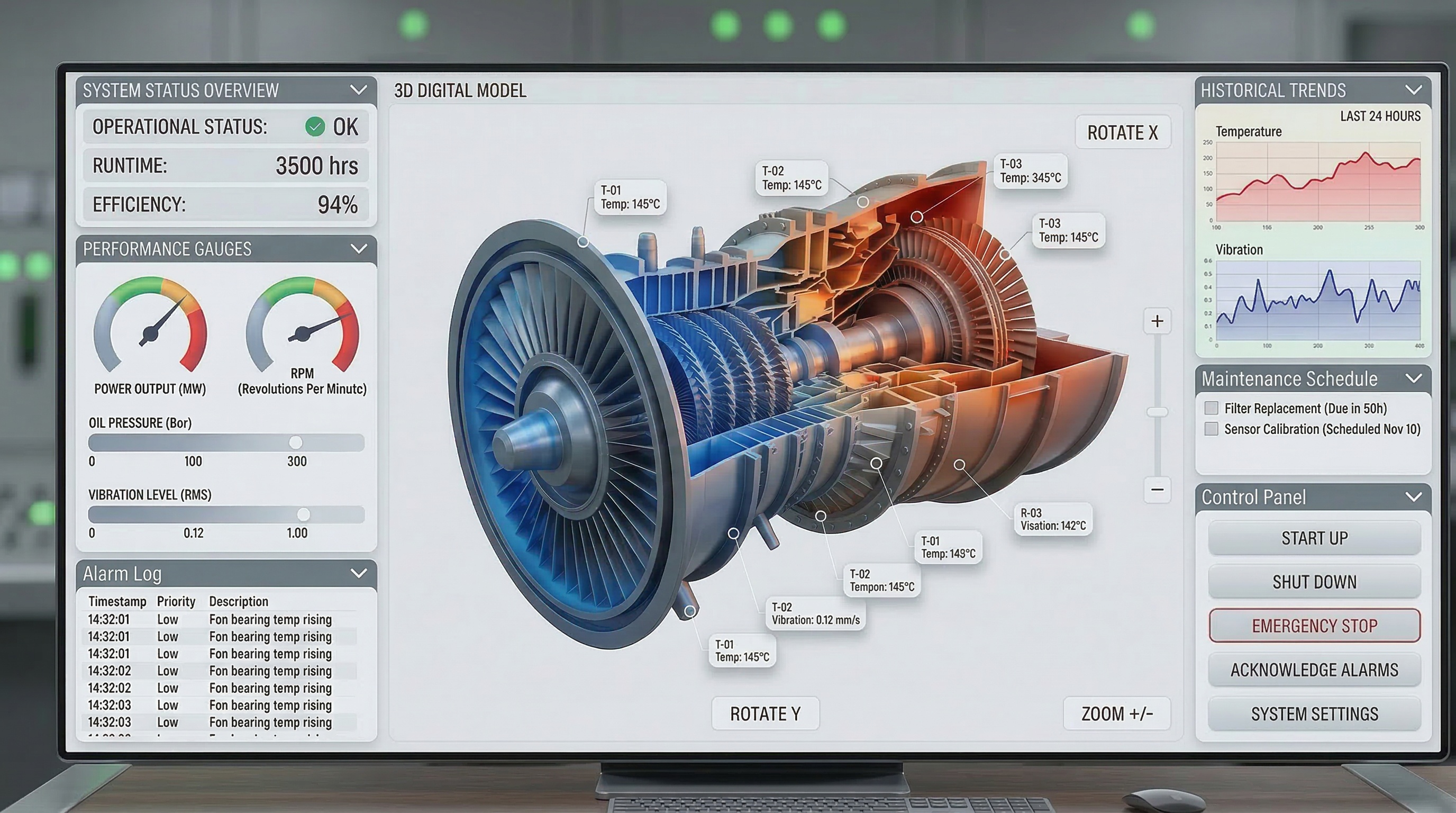

In industrial and automotive sectors, Digital Twin expansion is a top priority. The HMI provides a real-time, 3D-rendered replica of the machine's internal state, allowing operators to monitor performance and detect operating anomalies as they occur.

With tools like Qt’s real-time 3D rendering, designers are creating "razor-sharp" instrument clusters that show not just data, but a high-fidelity visualization of the environment.

Whether it’s an AR (Augmented Reality) overlay on a windshield or a 3D model of a robotic arm in a factory, operators can now engage with HMIs that move from 2D charts to immersive, spatial experiences, improving operational efficiency.

The HMI of 2026 doesn't just respond to you—it knows you. Biometric Intelligence has moved beyond simple unlocking mechanisms and is now a standard pillar of both security and UX. By integrating facial recognition, fingerprint sensors, and even real-time stress-level monitoring, interfaces can now pivot their behavior based on the identity and physiological state of the user.

In 2026, the interface is no longer a static "one-size-fits-all" tool; it is a fluid environment that optimizes itself for the specific human standing in front of it.

Finally, the foundational process of building these interfaces has undergone a radical transformation. In 2026, AI-native development platforms have become the indispensable backbone of HMI creation, effectively collapsing the traditional silos between design and engineering. Teams at firms like UXDivers have transitioned into "forward-deployed engineers," leveraging AI to bridge the gap from Figma to production-ready QML or C++ code almost instantly.

By utilizing generative coding assistants and automated UI-to-code pipelines, teams have seen the development cycle for complex, mission-critical HMIs compressed from months to mere weeks. This shift doesn't just save time; it empowers teams to focus on high-level User Experience (UX) experimentation and pixel-perfect finishes that were previously cost-prohibitive. In this new era, the "hand-off" is dead, replaced by a continuous, AI-augmented flow that ensures the final product is as sophisticated as the vision behind it.

The "Software-Defined" trend has reached its peak maturity. In 2026, we have moved past the era where a product's interface was "baked in" at the factory. Today, hardware is fully decoupled from interaction logic, allowing the HMI to exist as a flexible software layer rather than a static component.

This shift facilitates seamless Over-the-Air (OTA) updates that go far beyond simple bug fixes. A vehicle or industrial machine can now receive a completely redesigned UI layout, brand-new gesture controls, or updated AI personality modules years after it was manufactured. By treating the HMI as a living platform, companies are effectively eliminating "Day 1 obsolescence." This not only extends the product’s lifecycle but allows the user experience to evolve at the speed of software, ensuring the machine stays as modern as the latest smartphone.

To minimize latency and bolster data privacy, HMI intelligence in 2026 has shifted heavily toward "the edge" (on-device processing). Moving work off the cloud allows near-instant decision-making, removes connectivity lag, and reduces how much sensitive data ever leaves the device. Edge-AI engines now proactively predict operators' needs, reorganizing screen content in real time based on the specific task at hand.

Supported by continuous real-time monitoring, these interfaces ensure a seamless experience by anticipating friction points. For example, if a factory sensor detects a subtle, out-of-spec vibration in a turbine, the HMI doesn't just trigger an alarm; it automatically surfaces the specific diagnostic tools and maintenance manuals before the operator even begins their search. By moving from a "search and find" model to a "predict and present" flow, 2026 HMIs are drastically reducing cognitive load and response times in high-pressure environments.
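The "predict and present" flow can be sketched in a few lines of Python. This is an illustration under stated assumptions, not a real HMI framework: the `Panel` and `Screen` types, the `RELEVANT_PANELS` mapping, and the vibration threshold are all hypothetical.

```python
from dataclasses import dataclass, field


@dataclass
class Panel:
    name: str
    priority: int = 0   # higher values are shown first


@dataclass
class Screen:
    panels: list[Panel] = field(default_factory=list)

    def ordered(self) -> list[str]:
        # Stable sort: panels with equal priority keep their original order.
        return [p.name for p in sorted(self.panels, key=lambda p: -p.priority)]


# Hypothetical mapping from a detected condition to the panels worth surfacing.
RELEVANT_PANELS = {
    "turbine_vibration": ["Vibration spectrum", "Turbine maintenance manual"],
}

VIBRATION_LIMIT_MM_S = 4.5  # illustrative threshold, not a real specification


def on_vibration_reading(screen: Screen, rms_mm_s: float) -> bool:
    """Promote diagnostic panels when a reading is out of spec.

    Returns True if the screen was reorganized.
    """
    if rms_mm_s <= VIBRATION_LIMIT_MM_S:
        return False
    existing = {p.name: p for p in screen.panels}
    for name in RELEVANT_PANELS["turbine_vibration"]:
        panel = existing.get(name)
        if panel is None:
            # The panel was not on screen yet, so add it.
            panel = Panel(name)
            screen.panels.append(panel)
        panel.priority = 10
    return True
```

An in-spec reading leaves the layout alone. An out-of-spec reading moves the vibration spectrum and the maintenance manual to the front, so the operator finds them without searching.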

In 2026, HMI design is being held to strict sustainability standards, particularly under the EU’s updated Ecodesign for Sustainable Products Regulation (ESPR). This shift has moved "Green UX" from a marketing buzzword to a core engineering requirement. Designers are now implementing power-efficient UI architectures that prioritize system-level "Adaptive Dark Modes" and OLED-optimized layouts, which drastically reduce energy consumption by dimming individual pixels.
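A rough way to reason about the OLED claim is to treat average frame luminance as a stand-in for panel power. The Python sketch below does that for two toy frames. It is a deliberately crude model: it assumes power scales linearly with luminance, ignores gamma and the different efficiency of red, green, and blue subpixels, and uses hypothetical function names.

```python
def relative_luminance(rgb: tuple[int, int, int]) -> float:
    """Approximate luminance in [0, 1] using Rec. 709 weights on raw 8-bit values.

    Gamma is ignored for brevity, so this is only a rough proxy.
    """
    r, g, b = rgb
    return (0.2126 * r + 0.7152 * g + 0.0722 * b) / 255.0


def estimated_panel_load(pixels: list[tuple[int, int, int]]) -> float:
    """Mean luminance of a frame, used as a stand-in for OLED power draw."""
    if not pixels:
        return 0.0
    return sum(relative_luminance(p) for p in pixels) / len(pixels)


# Two toy "frames" of 100 pixels: 90 background pixels and 10 text pixels.
light_theme = [(255, 255, 255)] * 90 + [(0, 0, 0)] * 10
dark_theme = [(0, 0, 0)] * 90 + [(255, 255, 255)] * 10

light_load = estimated_panel_load(light_theme)
dark_load = estimated_panel_load(dark_theme)
```

Under these assumptions the dark layout lights far fewer pixels than the light one. Real savings depend on the panel, its brightness setting, and the content shown, so numbers like these are a design-time heuristic rather than a measurement.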

Beyond the software layer, hardware is evolving to include high-brightness, low-energy panels that help manufacturers meet strict net-zero corporate commitments. We are also seeing the integration of Digital Product Passports (DPP) within the HMI software, providing users and regulators with real-time transparency regarding the device's carbon footprint and material recyclability. By optimizing everything from lightweight code to hardware luminosity, 2026 interfaces prove that high-performance visuals can coexist with environmental responsibility.

As interactive robots move beyond the factory floor into high-traffic retail and hospitality settings, the HMI has evolved into the "face" and social anchor of the machine. In 2026, these interfaces are designed around Industry 5.0 human-centric principles, prioritizing safe and intuitive interaction through multi-modal signaling.

Modern cobots now utilize Intent Visualization, where the HMI communicates the robot’s next move before it happens. This includes displaying a "path-of-travel" directly onto the floor or screen, so nearby humans can naturally anticipate its trajectory. Complemented by light-based signaling (using smart LED arrays to communicate status or "mood"), these interfaces ensure that whether a robot is delivering a room-service tray or restocking a shelf, its presence feels predictable, safe, and collaborative rather than intrusive.

With the rise of hyper-connected devices, security has evolved from a background process into a core UI element. In 2026, HMIs are integrating Zero-Trust frameworks directly into the user flow, moving away from the "perimeter defense" model. This means that multi-factor authentication (MFA) and encrypted protocols are no longer intrusive "pop-ups" that disrupt the workflow; instead, they are woven into the interaction design.

By utilizing continuous behavioral biometrics, such as real-time analysis of touch patterns, gait, or facial features, the HMI can verify identity without requiring the user to stop their task. This "invisible security" helps keep mission-critical systems, from power grids to surgical robots, protected without interrupting the people who operate them. In this new landscape, the interface itself serves as the primary gatekeeper, ensuring that every micro-interaction is authenticated, authorized, and encrypted by default.
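One way to picture continuous verification is as a rolling trust score that only interrupts the user when it drops too low. The Python sketch below assumes some upstream model already produces a per-interaction match score between 0 and 1. The `ContinuousAuthSession` class, the smoothing factor, and the threshold are hypothetical choices, not part of any specific Zero-Trust product.

```python
class ContinuousAuthSession:
    """Tracks a rolling trust score from per-interaction match scores."""

    def __init__(self, alpha: float = 0.3, threshold: float = 0.6):
        self.alpha = alpha          # weight given to the newest observation
        self.threshold = threshold  # below this, ask for explicit re-authentication
        self.trust = 1.0            # session starts right after a full login

    def observe(self, match_score: float) -> str:
        """Fold in a new score in [0, 1] and return the action the HMI should take."""
        if not 0.0 <= match_score <= 1.0:
            raise ValueError("match_score must be between 0 and 1")
        # Exponential moving average: recent behavior counts more than old behavior.
        self.trust = self.alpha * match_score + (1 - self.alpha) * self.trust
        return "allow" if self.trust >= self.threshold else "step_up"

    def reauthenticated(self) -> None:
        """Reset trust after the user passes an explicit check."""
        self.trust = 1.0
```

With these defaults, a single odd interaction does not interrupt the operator, while a run of poor matches pushes the session into `"step_up"` and triggers an explicit check. Tuning that trade-off between friction and risk is a design decision for each product.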

At UXDivers, we’re not just observing these trends; we’re actively implementing them in real-world HMI products.

We collaborate with teams building embedded interfaces, medical devices, and industrial systems, where performance, clarity, and reliability are non-negotiable. Our approach combines User Experience, front-end engineering, and system architecture, allowing us to take products from early UX exploration all the way to production-ready implementations in Qt, .NET, and embedded Linux environments.

What we consistently see is that the biggest challenges are no longer purely technical or purely design-related; they emerge at the intersection of both. Bridging that gap is where most HMI projects succeed or fail.